Sentimental Senators: A Sentiment Analysis of Tweets Made by US Senators

Gabe Friend and Tim Shinners

![Figure 1](../../../../post/2020-05-27-sentimental-senators_files/senate.jpg)

Figure 1: (Source: Anadolu Agency)

Over the past few months, the United States has been confronted with healthcare, economic, and social disruptions brought about by the COVID-19 pandemic. In response, government-mandated social distancing and shelter-in-place orders have become the norm, as have large-scale economic bailouts the likes of which have not been seen since the Great Recession that began in 2007. As expected, opinions on these measures have been split, particularly along party lines. While plenty of news outlets offer interpretations and research on how opinions about the novel coronavirus and the current economic and social situation differ by political party affiliation, we wanted to do our own investigation. In this analysis, we sought to answer a specific question: over the past four months, how has the sentiment of the vocabulary US senators use online changed, and how does this sentiment differ between Democrats and Republicans?

To answer this question, we looked at all of the tweets posted by each US senator’s Twitter account since 1/1/2020 and examined the vocabulary used in them. We chose tweets to analyze the sentiment of senators’ vocabulary for three reasons. First, tweets are posted very frequently, which let us gauge how a senator’s rhetoric and vocabulary changed day to day in response to current events. Second, although politicians’ tweets are relatively formal compared to those of the general public, the colloquial nature of the medium lets them use a more nuanced, informal, and personal vocabulary than would be found in a speech, a piece of legislation, or a press release. Lastly, Twitter makes it easy for users to gather data from its platform. To do this, we taught ourselves two R packages, twitteR and rtweet, which let us make queries and receive data sets of tweets made by our senators. We also scraped each senator’s name, party, occupation, and other information from Wikipedia. Each senator’s Twitter username was scraped from a separate website listing senators’ accounts. That source was fairly old, and a number of senators had taken office after it was created, so we found the remaining usernames using another source and by searching Twitter manually.

In this project, we used sentiment analysis. Put concisely, this is a technique in which a unit of text (often a single word) is classified according to the sentiment, or group of sentiments, associated with it. For example, the word ‘happy’ could be considered to have a positive sentiment, while the word ‘angry’ would be considered to have a negative one. Sentiment analysis is typically performed with a lexicon, which in this context is essentially a collection of words, each assigned a sentiment when the lexicon was created. For this project, after removing common words generally considered not useful for sentiment analysis (by referencing a ‘stop words’ lexicon), we assigned each remaining word of each tweet a sentiment of either ‘positive’ or ‘negative’ based on its entry in our reference lexicon of choice.
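The stop-word removal and lexicon lookup described above can be sketched in a few lines. The authors worked in R; this Python version is only an illustration, and the tiny `STOP_WORDS` set and `LEXICON` dictionary are hypothetical stand-ins for a real stop-word list and a published sentiment lexicon. The tokenizer (lower-case, keep runs of letters and apostrophes) is also an assumption.

```python
import re

# Hypothetical toy stand-ins: a real analysis would use a full stop-word list
# and a published sentiment lexicon instead of these hand-picked entries.
STOP_WORDS = {"the", "a", "an", "and", "is", "to", "of", "we", "our", "this", "for", "in"}
LEXICON = {
    "happy": "positive",
    "proud": "positive",
    "support": "positive",
    "angry": "negative",
    "crisis": "negative",
    "fail": "negative",
}


def tokenize(text):
    """Lower-case the text and split it into word tokens (letters and apostrophes)."""
    return re.findall(r"[a-z']+", text.lower())


def label_words(tweet):
    """Drop stop words, then return (word, sentiment) pairs for words in the lexicon.

    Words with no lexicon entry are skipped, so they contribute no sentiment.
    """
    tokens = [t for t in tokenize(tweet) if t not in STOP_WORDS]
    return [(t, LEXICON[t]) for t in tokens if t in LEXICON]


print(label_words("We are proud to support our nurses in this crisis!"))
# [('proud', 'positive'), ('support', 'positive'), ('crisis', 'negative')]
```

Note that "nurses" and "are" are silently dropped because they have no lexicon entry, which is exactly the coverage issue a lexicon-based approach has to live with.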

The lexicon we used also assigns each word a numerical value reflecting its sentiment, ranging from -5 to +5 inclusive. The more negative a word’s sentiment, the more negative its value (e.g., -5 is more negative than -3), and vice versa for positive words. This analysis has some shortcomings. When comparing a set of words to a lexicon, some of the words of interest may not appear in the lexicon at all. This can be a problem, because many words commonly considered ‘positive’ or ‘negative’ will not be assigned a sentiment simply because the lexicon lacks an entry for them. As a result, the outcome of a sentiment analysis can change depending on the lexicon used.
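To illustrate how numeric scores are averaged, and how the choice of lexicon changes the result, here is a hedged Python sketch (not the authors' R code). `LEXICON_A` and `LEXICON_B` are hypothetical lexicons with made-up scores in the -5 to +5 range; the same tweet gets a different average under each because `LEXICON_B` lacks two of the words.

```python
import re

STOP_WORDS = {"the", "a", "an", "and", "is", "to", "of", "we", "our", "this", "for", "in"}

# Two hypothetical numeric lexicons with illustrative scores in [-5, +5].
LEXICON_A = {"proud": 2, "support": 2, "crisis": -3, "devastating": -4}
LEXICON_B = {"proud": 2, "crisis": -3}  # lacks "support" and "devastating"


def average_sentiment(tweet, lexicon):
    """Mean score of the tweet's non-stop words that appear in `lexicon`.

    Returns None when no word matches, since an unscored tweet has no average.
    """
    tokens = [t for t in re.findall(r"[a-z']+", tweet.lower()) if t not in STOP_WORDS]
    scores = [lexicon[t] for t in tokens if t in lexicon]
    return sum(scores) / len(scores) if scores else None


tweet = "Proud to support our nurses in this devastating crisis"
print(average_sentiment(tweet, LEXICON_A))  # -0.75 (scores 2, 2, -4, -3)
print(average_sentiment(tweet, LEXICON_B))  # -0.5 (scores 2, -3)
```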

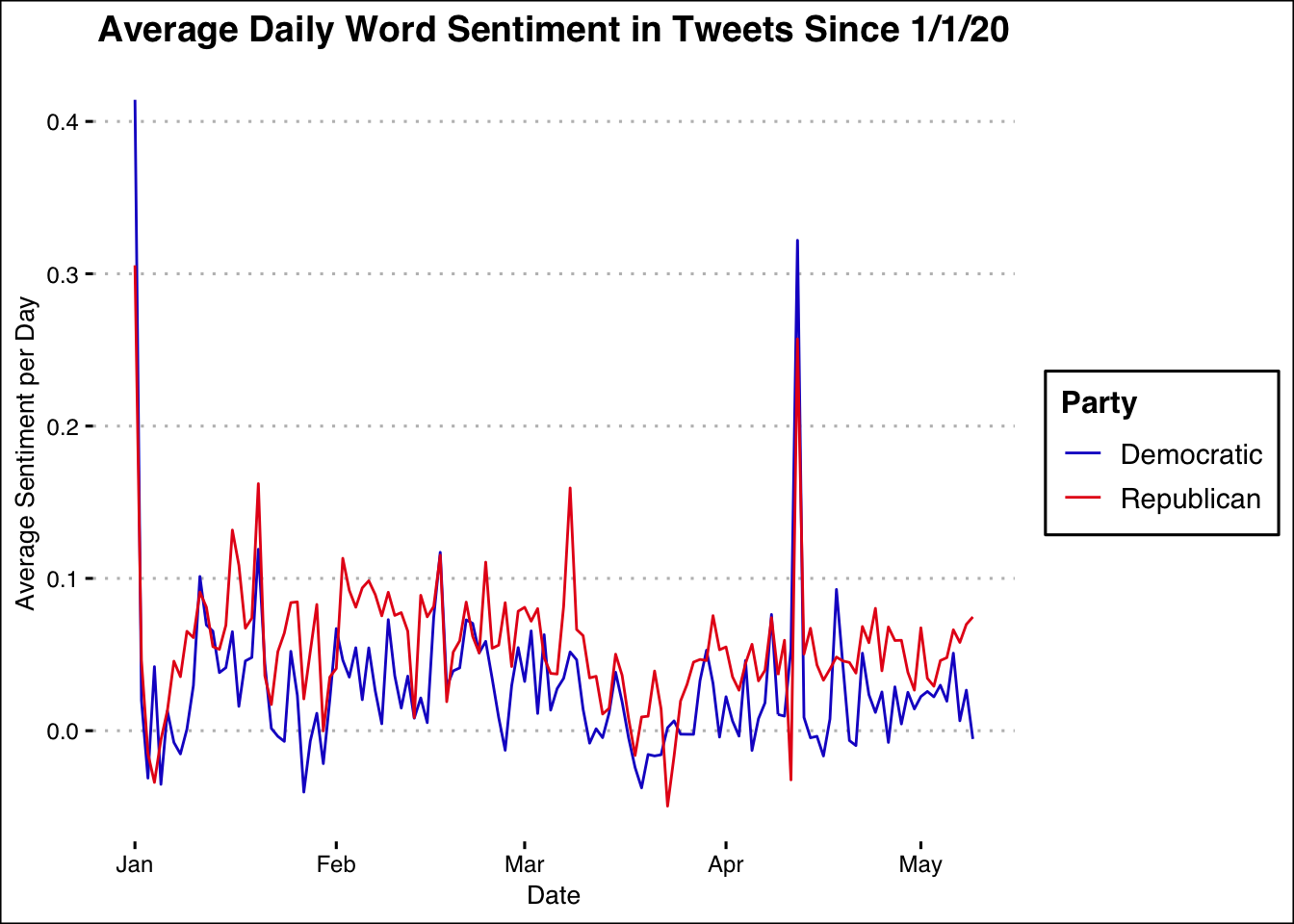

First, we looked at how the overall sentiment of the vocabulary used in tweets varied from day to day. The plot above shows how the average daily word sentiment in senators’ tweets differs by party affiliation and changes over time.

Looking at this graph, a few things jump out. First, on most days the average sentiment of Republican senators’ tweet vocabulary appears to be more positive than that of Democratic senators. Next, there are several large spikes in average sentiment. The first is at the beginning of January, driven by New Year’s Day tweets, which predictably contain overwhelmingly positive sentiment. The next is in mid-January, when the articles of impeachment against Donald Trump were delivered to the Senate, beginning President Trump’s impeachment trial. An increase in vocabulary sentiment around this time makes sense for GOP senators: using positive words and praising Donald Trump as President could be an effective way to dampen constituents’ support for his impeachment. At the same time, Democratic senators also showed an uptick in average word sentiment; positive rhetoric and vocabulary could also help build support for Trump’s impeachment, depending on how the issue is framed. We also see another big jump in GOP senators’ average daily word sentiment in early February, when Donald Trump was acquitted. In early March there is a noticeable increase in average daily sentiment for Republican senators, potentially reflecting attempts to ease concerns stemming from the financial market downturn that began in the same period. In mid-April there is another large jump in average sentiment for both Republican and Democratic senators. This is most likely a response to former President Barack Obama formally endorsing Joe Biden as the presumptive Democratic presidential nominee. A consolidation of the Democratic Party, and the positive sentiment that comes from a former Democratic leader standing behind a new one, makes sense, but why this corresponds with increased Republican sentiment is unclear. One possibility is that, in response to Democrats rallying behind their nominee, Republican senators rallied behind President Donald Trump, using positive vocabulary while doing so.
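A minimal Python sketch of the daily, per-party aggregation follows. It assumes one record per scored word, tagged with the tweet's date and the author's party, and it assumes the average is taken over all scored words rather than over per-tweet averages; the post does not say which. The records are made-up sample data, and `daily_party_average` is a hypothetical helper, not the authors' code.

```python
from collections import defaultdict
from datetime import date

# Hypothetical sample input: (tweet date, party, word score), one row per scored word.
scored_words = [
    (date(2020, 1, 1), "R", 3), (date(2020, 1, 1), "R", 2),
    (date(2020, 1, 1), "D", 3), (date(2020, 1, 1), "D", -1),
    (date(2020, 1, 2), "R", -2), (date(2020, 1, 2), "D", -3),
]


def daily_party_average(records):
    """Return {(day, party): mean word score} for every day/party pair present."""
    totals = defaultdict(lambda: [0, 0])  # (day, party) -> [sum of scores, word count]
    for day, party, score in records:
        totals[(day, party)][0] += score
        totals[(day, party)][1] += 1
    return {key: s / n for key, (s, n) in totals.items()}


for (day, party), avg in sorted(daily_party_average(scored_words).items()):
    print(day, party, avg)
```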

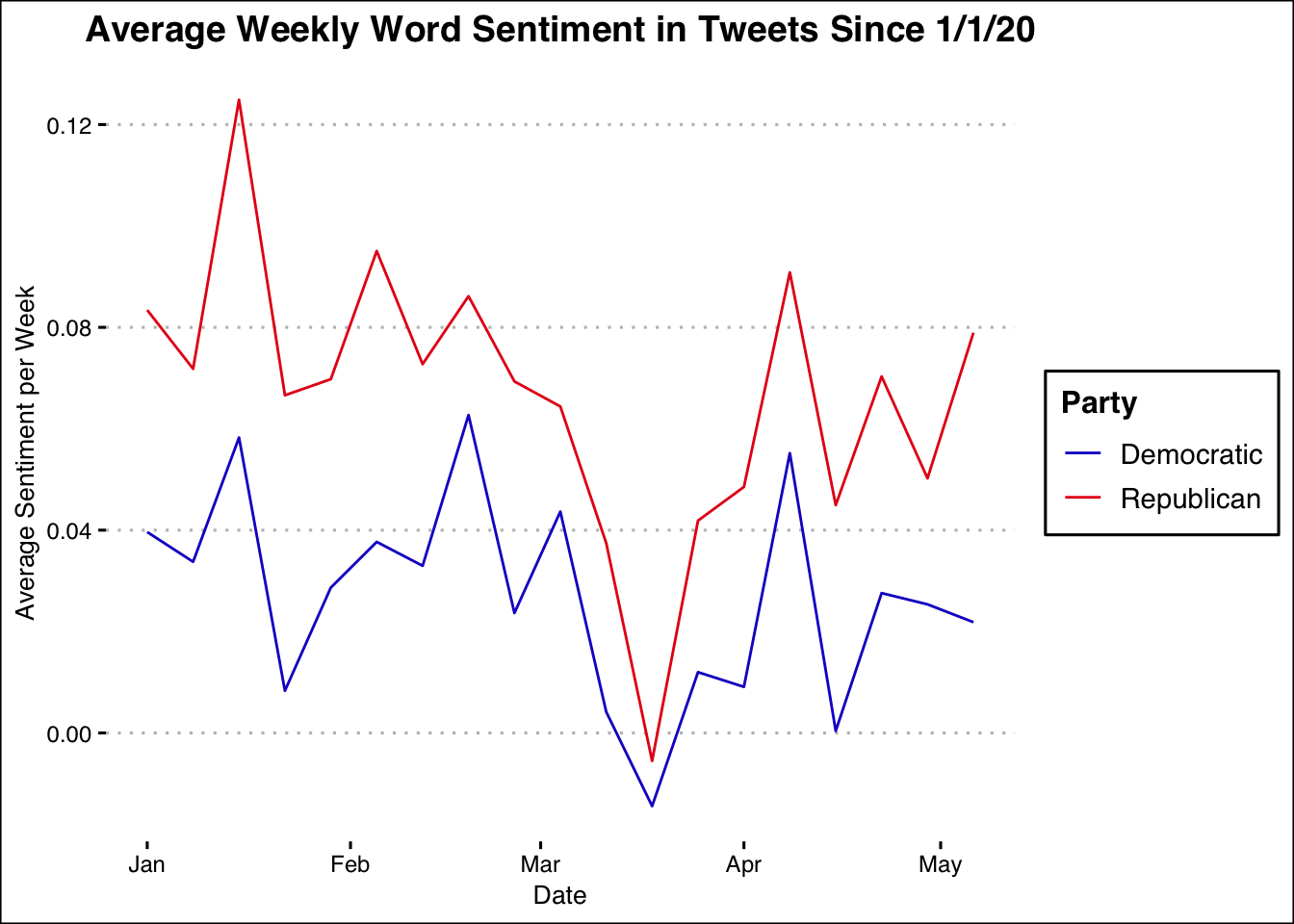

We also looked at weekly averages of word sentiment to get a better sense of trends over longer periods. The graph above shows average weekly, rather than daily, word sentiment.

As with the daily graph, this graph suggests that Republican senators’ tweets usually have a higher average word sentiment than those of Democratic senators. It also shows a large drop in average weekly word sentiment for both parties in March, followed by a bipartisan rise through early April. The exact causes cannot be determined within the scope of this analysis, but this fall and rise in sentiment coincides with the period in which the domestic COVID-19 crisis reached its peak and the situation then began to steadily improve.
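Weekly averages can be computed the same way after bucketing each date into a week. This Python sketch assumes weeks start on Monday and pools all scored words in a week, which weights busy days more heavily than averaging seven daily means would; both choices are assumptions, and `week_start` and `weekly_party_average` are hypothetical helpers.

```python
from collections import defaultdict
from datetime import date, timedelta


def week_start(day):
    """Map a date to the Monday that begins its week (an assumed week convention)."""
    return day - timedelta(days=day.weekday())


def weekly_party_average(records):
    """Return {(week_start, party): mean word score}, pooling all scored words in the week."""
    totals = defaultdict(lambda: [0, 0])  # key -> [sum of scores, word count]
    for day, party, score in records:
        key = (week_start(day), party)
        totals[key][0] += score
        totals[key][1] += 1
    return {key: s / n for key, (s, n) in totals.items()}


# Hypothetical scored words: (date, party, score). March 9, 2020 was a Monday.
scored_words = [
    (date(2020, 3, 9), "R", 1), (date(2020, 3, 12), "R", -3),
    (date(2020, 3, 16), "R", 2), (date(2020, 3, 10), "D", -2),
]
print(weekly_party_average(scored_words))
```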

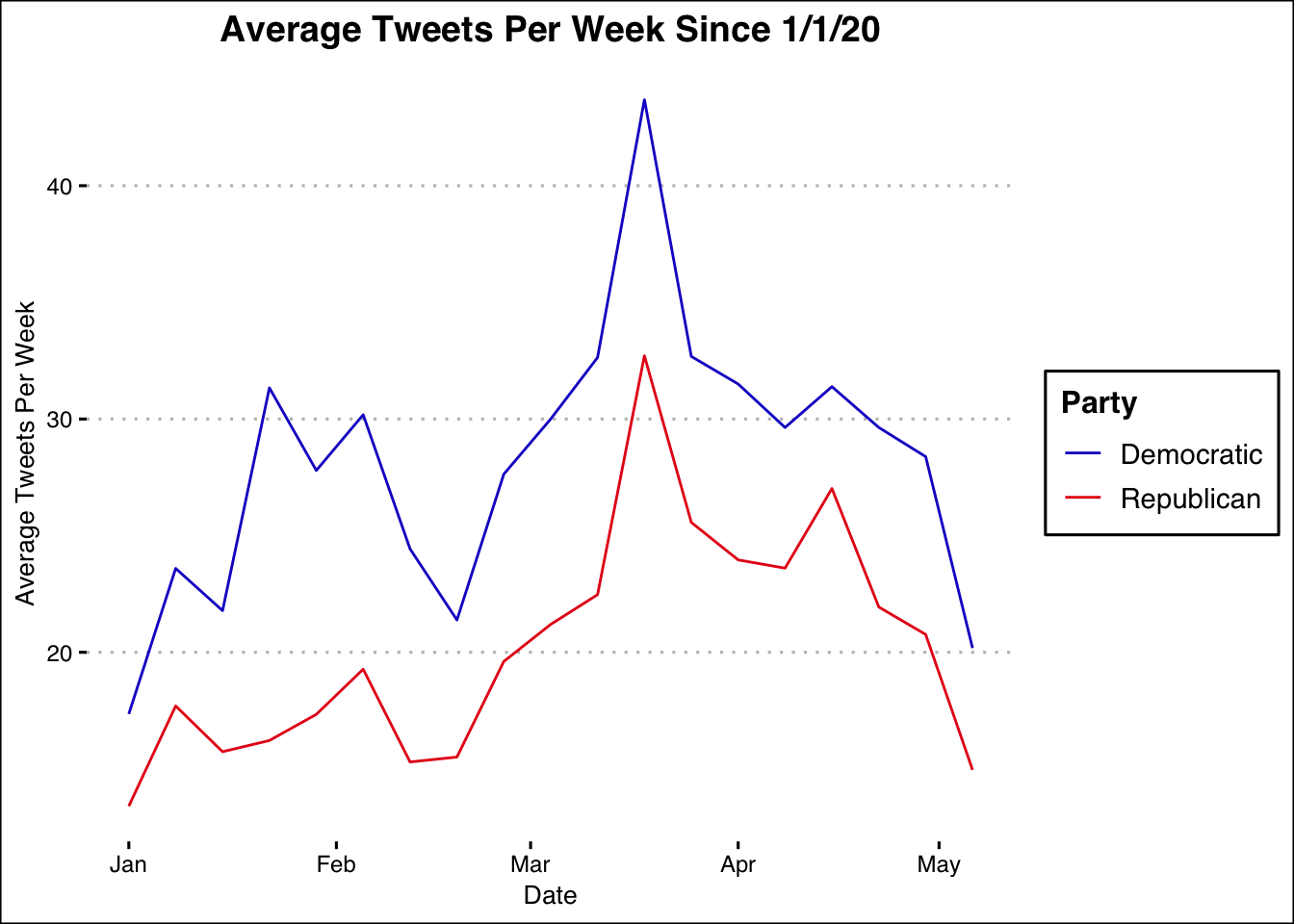

In addition to sentiment differences, we were also curious about the tweeting habits of senators in different parties.

The graph above plots the average number of tweets per week for each party since 1/1/20. On average, Democratic senators consistently tweet more per week than Republican senators, particularly in February (coinciding with Donald Trump’s impeachment trial). In mid-March, senators from both parties began tweeting more per week, likely due to COVID-19, stimulus packages, social distancing measures, and general uncertainty during this period. Interestingly, this peak occurs at about the same time as the large drop in sentiment in the previous graph, and the rise and subsequent fall in tweet volume mirrors, in reverse, that fall and rise in sentiment.
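Averaging tweets per week by party has one subtlety worth making explicit: senators who post nothing in a given week should arguably count as zero, otherwise the average covers only active senators. This Python sketch takes that approach. The roster, the senator names, and `avg_tweets_per_week` are hypothetical, and Monday-start weeks are an assumption.

```python
from collections import Counter
from datetime import date, timedelta


def week_start(day):
    """Map a date to the Monday that begins its week (assumed convention)."""
    return day - timedelta(days=day.weekday())


def avg_tweets_per_week(tweets, roster):
    """Average number of tweets per senator for each (week, party).

    tweets: list of (senator, date); roster: {senator: party}.
    Senators with no tweets in a week count as zero, so the denominator is
    every senator in the party, not only the ones who posted.
    """
    counts = Counter((s, week_start(d)) for s, d in tweets)  # missing keys read as 0
    weeks = {week_start(d) for _, d in tweets}
    party_size = Counter(roster.values())
    result = {}
    for week in weeks:
        for party, size in party_size.items():
            total = sum(counts[(s, week)] for s, p in roster.items() if p == party)
            result[(week, party)] = total / size
    return result


# Hypothetical data; February 3, 2020 was a Monday.
roster = {"sen_a": "D", "sen_b": "D", "sen_c": "R"}
tweets = [
    ("sen_a", date(2020, 2, 3)), ("sen_a", date(2020, 2, 4)),
    ("sen_a", date(2020, 2, 5)), ("sen_c", date(2020, 2, 6)),
]
print(avg_tweets_per_week(tweets, roster))
```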

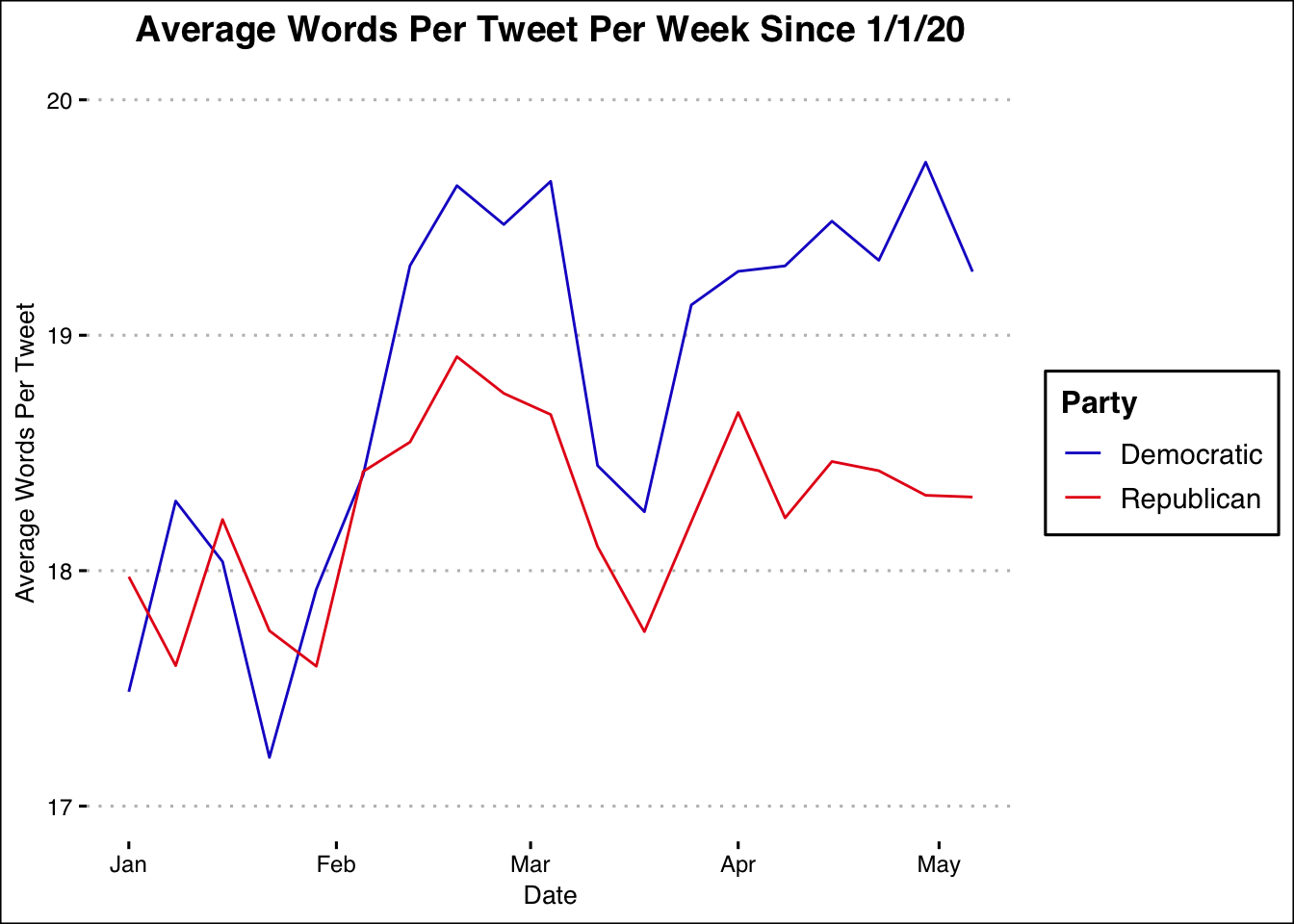

We also looked at the average number of words per tweet within each week by party.

This shows that Democratic senators mostly use more words per tweet than GOP senators, with an especially large discrepancy emerging in mid-February and again from April to the present. In the previously mentioned March to April period, characterized by a fall and then a rise in sentiment, we also see a fall and then a rise in average words per tweet. This suggests that as the average word sentiment of tweets became more negative, tweets became shorter and more frequent, and vice versa.
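A sketch of words per tweet per week follows, under the assumption that words are counted by whitespace splitting of the raw tweet text before stop-word removal (the post does not say which). `avg_words_per_tweet` and the sample tweets are hypothetical, and weeks are assumed to start on Monday.

```python
from collections import defaultdict
from datetime import date, timedelta


def week_start(day):
    """Map a date to the Monday that begins its week (assumed convention)."""
    return day - timedelta(days=day.weekday())


def avg_words_per_tweet(tweets):
    """Return {(week_start, party): mean words per tweet}.

    tweets: list of (date, party, text). Words are counted by splitting the
    raw text on whitespace, before any stop-word removal (an assumption).
    """
    totals = defaultdict(lambda: [0, 0])  # key -> [total words, tweet count]
    for day, party, text in tweets:
        key = (week_start(day), party)
        totals[key][0] += len(text.split())
        totals[key][1] += 1
    return {key: w / n for key, (w, n) in totals.items()}


# Hypothetical tweets; April 6, 2020 was a Monday.
tweets = [
    (date(2020, 4, 6), "D", "Stay home and stay safe"),
    (date(2020, 4, 7), "D", "Wash your hands"),
    (date(2020, 4, 8), "R", "Help is on the way"),
]
print(avg_words_per_tweet(tweets))
```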

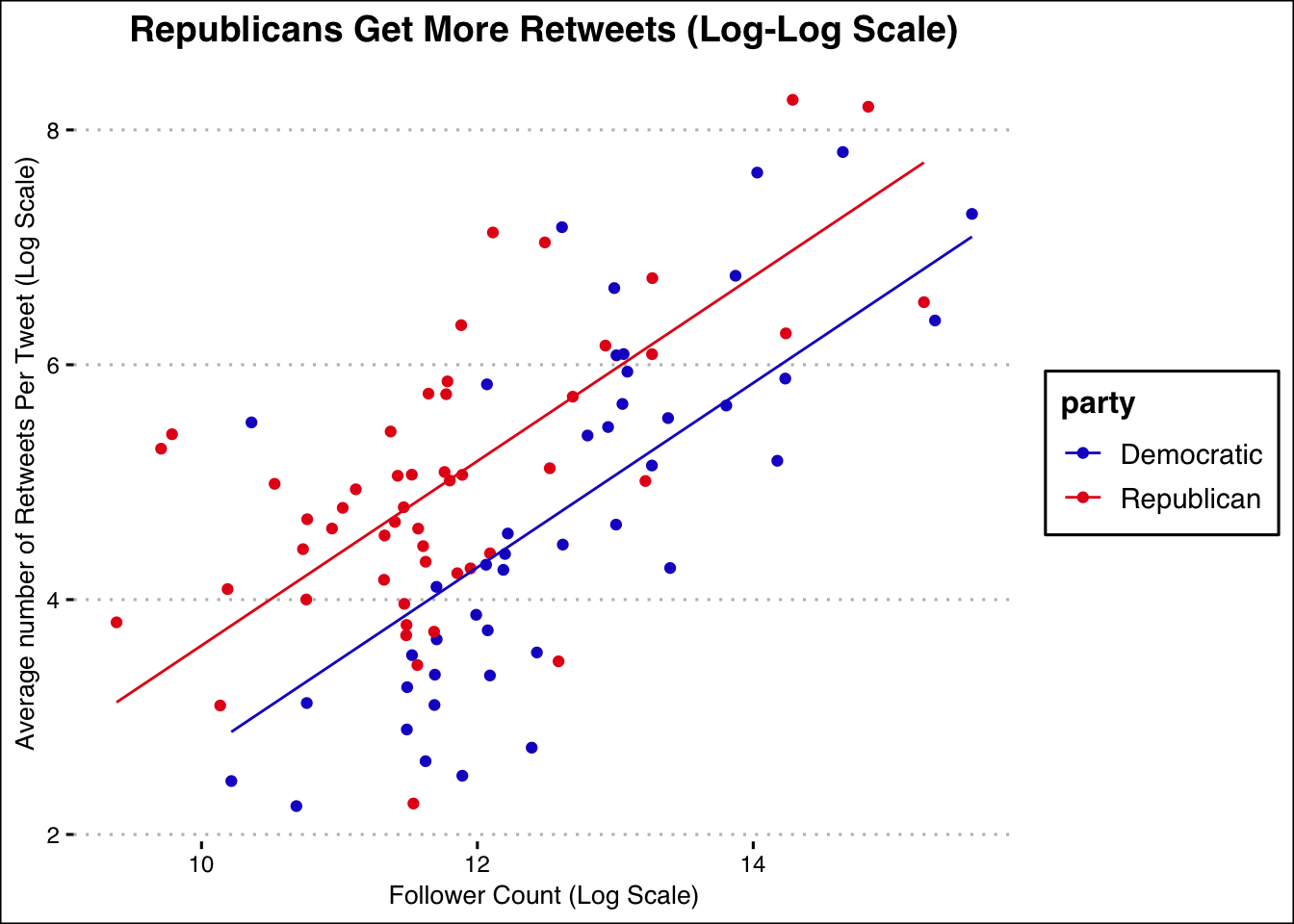

After the sentiment analysis, we wanted to learn more about how followers engage with senators of different parties. For each senator, we calculated the average number of retweets per tweet, giving us a rough measure of follower engagement. We plotted each senator’s total number of followers against their average number of retweets on a log-log scale. This gave us a sense of how follower engagement changes with follower count and let us compare the two parties.
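The per-senator engagement measure and the log-log transform might look like this in Python. `engagement_points` and the sample data are hypothetical; senators with a zero average retweet count are skipped because the logarithm of zero is undefined, which is one assumption about how such cases were handled.

```python
import math
from collections import defaultdict


def engagement_points(tweets, followers):
    """Return {senator: (log10 followers, log10 mean retweets per tweet)}.

    tweets: list of (senator, retweet_count); followers: {senator: follower count}.
    Senators whose mean retweet count (or follower count) is zero are skipped,
    since log10(0) is undefined.
    """
    totals = defaultdict(lambda: [0, 0])  # senator -> [total retweets, tweet count]
    for senator, retweets in tweets:
        totals[senator][0] += retweets
        totals[senator][1] += 1
    points = {}
    for senator, (rt, n) in totals.items():
        mean_rt = rt / n
        if mean_rt > 0 and followers.get(senator, 0) > 0:
            points[senator] = (math.log10(followers[senator]), math.log10(mean_rt))
    return points


# Hypothetical sample data.
tweets = [("sen_a", 100), ("sen_a", 300), ("sen_b", 0)]
followers = {"sen_a": 1_000_000, "sen_b": 50_000}
print(engagement_points(tweets, followers))
```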

We fit two lines of best fit to this data (a line of best fit describes the relationship between the two plotted variables). We used two lines because we wanted one for each of the two major political parties: one line for Democrats and a parallel line for Republicans. Because the lines are parallel, they share a slope and differ only in intercept, so the vertical gap between them shows the expected difference in (log) retweets between a Republican’s and a Democrat’s tweet at any given follower count. The fit indicates that, for a given follower count, we would expect a Republican senator’s tweet to receive more retweets than a Democratic senator’s.
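Two parallel lines of best fit correspond to an ordinary least-squares model with one shared slope and a separate intercept per party. One way to compute it, sketched here in Python as an assumption about the method rather than the authors' code, is to center each party's points on that party's means, regress the centered values to get the common slope, and then pass each party's line through its own means. `fit_parallel_lines` and the sample points are hypothetical; `x` stands for log10 followers and `y` for log10 average retweets.

```python
def fit_parallel_lines(points):
    """Least-squares fit of y = a_party + b * x with one slope b shared by all parties.

    points: list of (party, x, y). Centering x and y within each party and
    regressing the centered values gives the common slope; each party's
    intercept then makes its line pass through that party's means. This is
    equivalent to OLS with a party indicator and no interaction term.
    """
    groups = {}
    for party, x, y in points:
        groups.setdefault(party, []).append((x, y))
    means = {
        p: (sum(x for x, _ in v) / len(v), sum(y for _, y in v) / len(v))
        for p, v in groups.items()
    }
    sxy = sum((x - means[p][0]) * (y - means[p][1]) for p, v in groups.items() for x, y in v)
    sxx = sum((x - means[p][0]) ** 2 for p, v in groups.items() for x, _ in v)
    if sxx == 0:
        raise ValueError("x does not vary within any party; slope is undefined")
    slope = sxy / sxx
    intercepts = {p: my - slope * mx for p, (mx, my) in means.items()}
    return slope, intercepts


# Hypothetical (party, log10 followers, log10 mean retweets) points.
points = [("D", 5.0, 1.5), ("D", 6.0, 2.3), ("R", 5.0, 1.8), ("R", 6.0, 2.6)]
slope, intercepts = fit_parallel_lines(points)
print(slope, intercepts)
```

Because both axes are base-10 logarithms, `10 ** (intercepts["R"] - intercepts["D"])` gives the fitted ratio of expected Republican to Democratic retweets, and that ratio is the same at every follower count.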

In summary, over the past four months we observed that the vocabulary Republican senators use in tweets is more positive on average than that of Democratic senators; that sentiment among senators of the two parties often moved in tandem, though there were instances in which only one party showed an uptick; that Democratic senators tweet more frequently and more verbosely; and that, for a given follower count, Republican senators are expected to receive more retweets on average than Democratic senators.

This is a fairly limited analysis, and there are many additional areas to explore. We did not investigate the actual subject matter of tweets to see whether senators tweet about similar topics at the same time, nor did we examine which topics correlate with the most positive or negative sentiment. We are also interested in the average length of the words used in tweets, and whether it differs over time or between parties.

Overall, our analysis raises almost as many questions as it answers:

- Why is it that sentiment for both parties generally moved in tandem?

- What exactly were the specific motivating factors for the large changes in sentiment we observed?

- In what other ways do the tweeting habits of Republican and Democratic senators differ?

These are just some of the questions raised by what we have done here. Of course, this is a surface-level analysis of an incredibly complex topic that has been studied extensively. For more comprehensive analyses, we encourage you to look at additional work on sentiment analysis, political rhetoric, and Twitter-based analysis.

Additional information on political sentiment analysis using Twitter can be found in the following works:

Analyzing Political Sentiment on Twitter

Sentiment Analysis of Political Tweets: Towards an Accurate Classifier