How to "Hear" Shapes

Psych majors dive into the mind-bending world of sensory substitution.

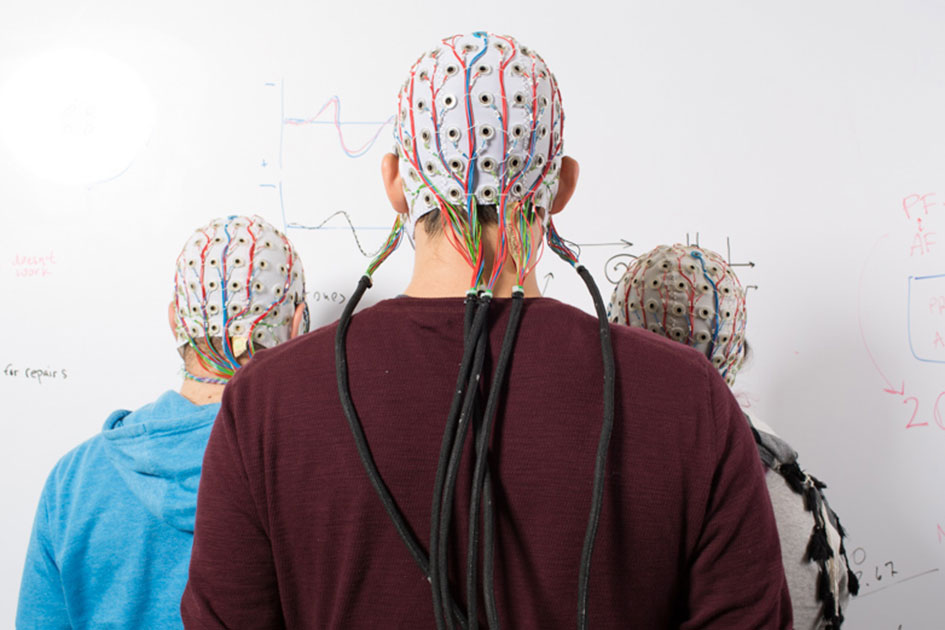

Orestis Papaioannou ’15 takes a cotton swab, pokes it through a hole in a mesh-fabric cap and gently massages a dab of saline gel into the scalp of his experimental subject, an English major named Dan.

As he adjusts the electrodes in the cap, Orestis—who sports a neatly-trimmed beard, flip-flops, and a t-shirt proclaiming “Life of the Mind”—runs through the experimental protocol. “We’re going to show you some shapes and play some sounds at the same time,” he explains. “You’re going to learn how to translate sounds back into shapes.”

Dan takes a sip of black-tea lemonade, settles himself in a chair, and dons a pair of headphones while Orestis and Chris Graulty ’15 huddle around a computer monitor that displays the subject’s brainwaves, which roll across the screen in a series of undulations, jumping in unison when the subject blinks.

The students are investigating the brain’s ability to take information from one perceptual realm, such as sound, and transfer it to another, such as vision. This phenomenon—known as sensory substitution—might seem like a mere scientific curiosity. But in fact, it holds enormous potential for helping people overcome sensory deficits and has profound implications for our ideas about what perception really is.

We’re sitting in Reed’s Sensation, Cognition, Attention, Language, and Perception Lab—known as the SCALP Lab—and this time I’m the subject. My mouth is dry and my palms sweaty. Orestis does his best to help me relax. “Don’t worry,” he smiles. “It’s a very simple task.” Orestis is going to show me some shapes on the computer screen and play some sounds through the headphones. For the first hour, each shape will be paired with a sound. Then he will play me some new sounds, and my job will be to guess which shapes they go with. I enter the soundproof booth, sit down at the keyboard, slip on the headphones, and click “start.”

The first shapes look like the letters of an alien alphabet. Here’s a zero squeezed between a pair of reverse guillemets. At the same time, panning left to right, I hear a peculiar sound, like R2-D2 being strangled by a length of barbed wire. Next comes an elongated U with isosceles arms: I hear a mourning dove flying across Tokyo on NyQuil. Now a triplet of triangles howls like a swarm of mutant crickets swimming underwater.

To call the sounds perplexing would be a monumental understatement. They are sonic gibberish, as incomprehensible as Beethoven played backwards. But after an hour listening to the sounds and watching the shapes march past on the screen, something peculiar starts to happen. The sounds start to take on a sort of character. The caw of a demented parrot as a dump truck slams on the brakes? Strange as it seems, there’s something, well, squiggly about that sound. A marimba struck by a pair of beer bottles? I’m not sure why, but it’s squareish.

Now the experiment begins in earnest. I hear a sound; my job is to select which of five images fits it best. First comes a cuckoo clock tumbling downstairs—is that a square plus some squiggles or the symbol for mercury? The seconds tick away. I guess—wrong. On to the next sound: a buzz saw cutting through three sheets of galvanized tin. Was it the rocketship? Yes, weirdly, it was. And so it goes. After an hour, my forehead is slick with sweat and concentration. It feels like listening to my seven-year-old son read aloud—listening to him stumble over the same word again and again, except that now I’m the one who’s stumbling blindly through this Euclidean cacophony. And yet (swarm of crickets, three triangles) my guesses are slowly getting better. Something strange is happening to my brain. The sounds are making sense.

I am learning to hear shapes.

The interlocking problems of sensation and perception have fascinated philosophers for thousands of years. Democritus argued that there was only one sense—touch—and that all the others were modified forms of it (vision, for example, being caused by the physical impact of invisible particles upon the eye). Plato contended that there were many senses, including sight, smell, heat, cold, pleasure, pain, and fear. Aristotle, in De Anima, argued that there were exactly five senses—a doctrine that has dominated Western thought on the subject ever since. In Chaucer’s Canterbury Tales, for example, the Parson speaks of the “fyve wittes” of “sighte, herynge, smellynge, savorynge, and touchynge.” Even today, a cursory internet search yields scores of websites designed to teach children about the five senses.

The five-sense theory got a boost from psychological research that mapped the senses to specific regions of the brain. We now know, for example, that visual processing takes place primarily in the occipital lobe, with particular sub-regions responsible for detecting motion, color, and shape. There’s even an area that specializes in recognizing faces—if that part of the brain is injured by a bullet, for example, the subject will lose the ability to recognize a face, even his own.

But the Aristotelian notion that the senses are distinct and independent, like TV channels, each with its own “audience” sitting on a couch somewhere in the brain, is deeply flawed, according to Professor Enriqueta Canseco-Gonzalez [psychology 1992–].

For starters, it fails to account for the fact that our sense of taste is largely dependent on our sense of smell (try eating a cantaloupe with a stuffy nose). More important, it doesn’t explain why people with sensory deficits are often able to compensate for their loss through sensory substitution—recruiting one sensory system as a stand-in for another. The Braille system, for example, relies on a person’s ability to use their fingers to “read” a series of dots embossed on a page.

In addition, psychologists and neuroscientists have identified several senses that Aristotle didn’t count, such as the sense of balance (the vestibular system) and the sense of proprioception, which tells you where your arms and legs are without you having to look.

In truth, says Prof. Canseco-Gonzalez, our senses are more like shortwave radio stations whose signals drift into each other, sometimes amplifying, sometimes interfering. They experience metaphorical slippage. This sensory crosstalk is reflected in expressions that use words from one modality to describe phenomena in another. We sing the blues, level icy stares, make salty comments, do fuzzy math, wear hot pink, and complain that the movie left a bad taste in the mouth. It also crops up in the intriguing neurological condition known as synesthesia, when certain stimuli provoke sensations in more than one sensory channel. (Vladimir Nabokov, for example, associated each letter of the alphabet with a different color.)

Canseco-Gonzalez speaks in a warm voice with a Spanish accent. She grew up in Mexico City, earned a BA in psychology and an MA in psychobiology from the National Autonomous University of Mexico, and a PhD from Brandeis for her dissertation on lexical access. Fluent in English, Spanish, Portuguese, and Russian, she has authored a score of papers on cognitive neuroscience and is now focusing on neuroplasticity—the brain’s ability to rewire itself in response to a change in its occupant’s environment, behavior, or injury.

She also knows something about psychological resilience from personal experience. She was working in Mexico City as a grad student in 1985 when a magnitude 8.1 earthquake occurred off the coast of Michoacán, crumbling her apartment building and killing more than 600 of her neighbors. At least 10,000 people died in the disaster.

To demonstrate another example of sensory substitution, she gets up from her desk, stands behind me, and traces a pattern across my back with her finger. “Maybe you played this game as a child,” she says. “What letter am I writing?” Although I am reasonably well acquainted with the letters of the alphabet, it is surprisingly difficult to identify them as they are traced across my back. (I guess “R”—it was actually an “A.”)

Incredibly, this game formed the basis for a striking breakthrough in 1969, when neuroscientist Paul Bach-y-Rita utilized sensory substitution to help congenitally blind subjects detect letters. He used a stationary TV camera to transmit signals to a special chair with a matrix of four hundred vibrating plates that rested against the subject’s back. When the camera was pointed at an “X,” the system activated the plates in the shape of an “X.” Subjects learned to read the letter through the tactile sensation on their backs—essentially using the sense of touch as a substitute for the sense of vision.

Bach-y-Rita’s experiments—crude as they may seem today—paved the way for a host of new approaches to helping people overcome sensory deficits. But this line of research also poses fundamental questions about perception. Do the subjects simply feel the letters or do they actually “see” the letters?

This problem echoes a question posed by Irish philosopher William Molyneux to John Locke in 1688. Suppose a person blind from birth has the ability to distinguish a cube from a sphere by sense of touch. Suddenly and miraculously, she regains her sight. Would she be able to tell which was the cube and which the sphere simply by looking at them?

The philosophical stakes were high and the question provoked fierce debate. Locke and other empiricist philosophers argued that the senses of vision and feeling are independent, and that only through experience could a person learn to associate the “round” feel of a sphere with its “round” appearance. Rationalists, on the other hand, contended that there was a logical correspondence between the sphere’s essence and its appearance—and that a person who grasped its essence could identify it by reasoning and observation.

(Recent evidence suggests that Locke was right—about this, at least. In 2011, a study published in Nature Neuroscience reported that five congenitally blind individuals who had their sight surgically restored were not, in fact, able to match Lego shapes by sight immediately after their operation. After five days, however, their performance improved dramatically.)

On a practical level, sensory substitution raises fundamental questions about the way the brain works. Is it something that only people with sensory deficits can learn? What about adults? What about people with brain damage?

As faster computers made sensory-substitution technology increasingly accessible, Canseco-Gonzalez realized that she could explore these issues at Reed. “I thought to myself, these are good questions for Reed students,” she says. “This is a great way to study how the brain functions. We are rethinking how the brain is wired.”

She found an intellectual partner in Prof. Michael Pitts [psychology 2011–], who obtained a BA in psych from University of New Hampshire, an MS and a PhD from Colorado State, and worked as a postdoc in the neuroscience department at UC San Diego before coming to Reed. Together with Orestis, Chris, and Phoebe Bauer ’15, they bounced around ideas to investigate. The Reed students decided to tackle a deceptively simple question. Can ordinary people be taught how to do sensory substitution?

To answer this, they constructed a three-part experiment. In the first phase, they exposed subjects to the strange shapes and sounds and monitored their brainwaves. In the second phase, they taught subjects to associate particular shapes with particular sounds, and tested their subjects’ ability to match them up. They also tested subjects’ ability to match sounds they had never heard with shapes they had never seen. Finally, they repeated the first phase to see if the training had any effect on the subjects’ brainwaves.

“I was skeptical at first,” Prof. Pitts admits. “I didn’t really think it was going to work.”

The trials took place over the summers of 2013 and 2014, and the preliminary results are striking. After just two hours of training, subjects were able to match new sounds correctly as much as 80% of the time. “That’s a phenomenal finding,” says Pitts. What’s more, the Reed team found that the two-hour training had far-reaching effects. Subjects who were given the test a year later still retained some ability to match sounds to shapes.

Just as significant, the subjects’ brainwaves demonstrated a different pattern after the training, suggesting that the shape-processing part of the brain was being activated, even though the information they were processing came in through their headphones. “Here is the punch line,” says Canseco-Gonzalez. “There is a part of the brain that processes shapes. Usually this is in the form of vision or touch. But if you give it sound, it still can extract information.”

The results—which the Reed team hopes to publish next year—suggest that the brain’s perceptual circuits are wired not so much by sense as by task. Some tasks, such as the ability to recognize shapes, are so important to human survival that the brain can actually rewire itself so as to accomplish them.

Does this mean people can really hear shapes and see sounds, or does it mean that the difference between seeing and hearing is fuzzier than we like to think? Either way, the experiment suggests that the brain retains the astonishing property of neuroplasticity well into middle age, and that we are only beginning to grasp its potential.

How It Works:

Shapes to Sounds

The system used at Reed relies on the Meijer algorithm, developed by Dutch engineer Peter Meijer in 1992 to translate images into sounds. The vertical dimension of the image is coded into frequencies between 500 Hz and 5,000 Hz, where higher spatial position corresponds to higher pitch. The horizontal dimension is coded into a 500-ms left-to-right panning effect. The resulting sound, in theory, includes all the information contained in the image, but is meaningless to the untrained ear.
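For readers who like to see the idea in code, here is a rough Python sketch of the mapping described above. It is not Meijer's implementation or the software used at Reed. The function names, the evenly spaced (linear) frequencies, the 22,050 Hz sample rate, and the simple linear pan are all assumptions chosen to keep the illustration short.

```python
import math


def row_frequencies(n_rows, f_lo=500.0, f_hi=5000.0):
    """Give each image row a pitch; the top row (index 0) is the highest.

    Linear spacing is an assumption made for this sketch.
    """
    if n_rows == 1:
        return [f_hi]
    step = (f_hi - f_lo) / (n_rows - 1)
    return [f_hi - r * step for r in range(n_rows)]


def image_to_stereo(image, duration=0.5, sample_rate=22050):
    """Turn a grid of brightness values (0.0 to 1.0) into stereo samples.

    The image is scanned column by column from left to right over
    `duration` seconds while the sound pans from the left ear to the right.
    Returns two lists of floats: (left_channel, right_channel).
    """
    n_rows, n_cols = len(image), len(image[0])
    freqs = row_frequencies(n_rows)
    n_samples = int(duration * sample_rate)
    left, right = [], []
    for i in range(n_samples):
        t = i / sample_rate
        pan = i / (n_samples - 1)                # 0.0 = far left, 1.0 = far right
        col = min(int(pan * n_cols), n_cols - 1)  # column being "read" right now
        # Each bright pixel in this column adds a sine tone at its row's pitch.
        mix = sum(
            image[r][col] * math.sin(2 * math.pi * freqs[r] * t)
            for r in range(n_rows)
        ) / n_rows
        left.append((1.0 - pan) * mix)
        right.append(pan * mix)
    return left, right
```

With this sketch, a grid whose only bright pixel sits in the top-left corner produces a brief 5,000 Hz tone heard mostly in the left ear, while a bright pixel in the bottom-right corner produces a 500 Hz tone heard mostly in the right.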
